Don't install software, define your environment with Docker and Pipeline

This is a guest post by Michael Neale, long-time open source developer and contributor to the Blue Ocean project.

If you are running parts of your pipeline on Linux, possibly the easiest way to get a clean, reusable environment is to use the CloudBees Docker Pipeline plugin.

In this short post I want to show how you can avoid installing stuff on the agents and have per-project, or even per-branch, customized build environments. Your environment, as well as your pipeline, is defined and versioned alongside your code.

I wanted to use the Blue Ocean project as an example of a project that uses the CloudBees Docker Pipeline plugin.

Environment and Pipeline for JavaScript components

The Blue Ocean project has a few moving parts, one of which is called the "Jenkins Design Language". This is a grab bag of re-usable CSS, HTML, style rules, icons and JavaScript components (using React.js) that provide the look and feel for Blue Ocean.

JavaScript and web development being what it is in 2016, many utilities are needed to assemble a web app. This includes npm and all that it needs, less.js to convert Less to CSS, Babel to "transpile" versions of JavaScript to other types of JavaScript (don’t ask), and more.

We could spend time installing nodejs/npm on the agents, but why not just use the official off-the-shelf Docker image from Docker Hub?

The only things that have to be installed and running on the build agents are the Jenkins agent and a Docker daemon.

A simple pipeline using this approach would be:

    node {
        stage "Prepare environment"
        checkout scm
        docker.image('node').inside {
            stage "Checkout and build deps"
            sh "npm install"
            stage "Test and validate"
            sh "npm install gulp-cli && ./node_modules/.bin/gulp"
        }
    }

This uses the stock "official" Node.js image from the Docker Hub, but doesn’t let us customize much about the environment.
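A side note on syntax: the bare `stage "name"` form used in the pipeline above dates from 2016, and later releases of the Pipeline stage step deprecate it in favour of a block that wraps the steps belonging to each stage. A minimal sketch of the same pipeline in that block form, assuming nothing else about the project changes:

```groovy
node {
    stage('Prepare environment') {
        checkout scm
    }
    // Same stock image as above; everything inside the closure runs in the container
    docker.image('node').inside {
        stage('Checkout and build deps') {
            sh 'npm install'
        }
        stage('Test and validate') {
            sh 'npm install gulp-cli && ./node_modules/.bin/gulp'
        }
    }
}
```

The behaviour should be the same; the block form simply makes it explicit which steps belong to which stage.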

Customising the environment, without installing bits on the agent

Being the forward looking and lazy person that I am, I didn’t want to have to go and fish around for a Docker image every time a developer wanted something special installed.

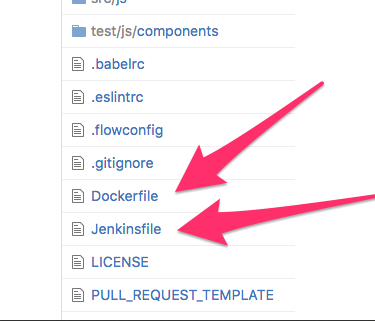

Instead, I put a Dockerfile in the root of the repo, alongside the Jenkinsfile:

The contents of the Dockerfile can then define the exact environment needed to build the project. Sure enough, shortly after this, someone came along saying they wanted to use Flow from Facebook (a typechecker for JavaScript). This required an additional native component to work (via apt-get install).

This was achieved via a pull request to both the Jenkinsfile and the Dockerfile at the same time.

So now our environment is defined by a Dockerfile with the following contents:

    # Lets not just use any old version but pick one
    FROM node:5.11.1

    # This is needed for flow, and the weirdos that built it in ocaml:
    RUN apt-get update && apt-get install -y libelf1

    RUN useradd jenkins --shell /bin/bash --create-home
    USER jenkins

The Jenkinsfile pipeline now has the following contents:

    node {
        stage "Prepare environment"
        checkout scm
        def environment = docker.build 'cloudbees-node'

        environment.inside {
            stage "Checkout and build deps"
            sh "npm install"

            stage "Validate types"
            sh "./node_modules/.bin/flow"

            stage "Test and validate"
            sh "npm install gulp-cli && ./node_modules/.bin/gulp"
            junit 'reports/**/*.xml'
        }

        stage "Cleanup"
        deleteDir()
    }

Even hip JavaScript tools can emit that weird XML format that test reporters can use, e.g. the junit result archiver.

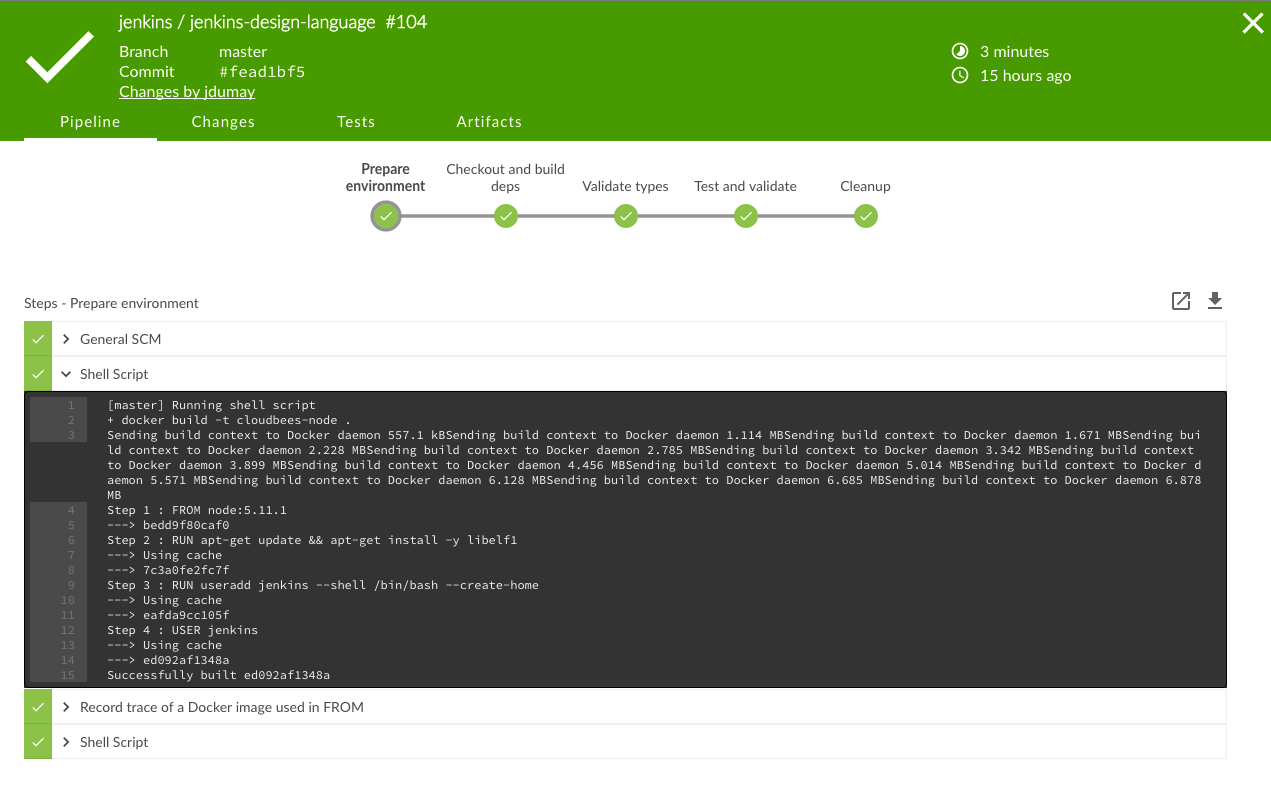

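The main change is that docker.build is now called to produce the environment, which is then used to run the build. Running docker build is essentially a "no-op" if the image has already been built on the agent before, since Docker can reuse its cached layers as long as the Dockerfile and base image have not changed.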

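As a hedged illustration of the docker.build call described above: going by the Docker Pipeline plugin documentation, docker.build accepts an optional second argument that is passed through to `docker build` and must end with the build context. The sketch below spells out the defaults (`-f Dockerfile` and `.`) and adds `--pull`; those flags are my illustrative choice, not something the Blue Ocean Jenkinsfile uses.

```groovy
node {
    checkout scm

    // Assumption: extra flags are illustrative only.
    // '--pull' asks Docker to check for a newer base image before building;
    // '-f Dockerfile' and the trailing '.' are the defaults written out explicitly.
    def environment = docker.build('cloudbees-node', '--pull -f Dockerfile .')

    environment.inside {
        // Quick sanity check that the container really is the Node.js environment
        sh 'node --version'
    }
}
```

With `--pull`, a rebuild is no longer purely local, so it trades a little speed for picking up base image updates sooner.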
What’s it like to drive?

Well, using Blue Ocean, to build Blue Ocean, yields a pipeline that visually looks like this (a recent run I screen capped):

This creates a pipeline that developers can tweak on a pull-request basis, along with any changes to the environment needed to support it, without having to install any packages on the agent.

Why not use docker commands directly?

You could of course just use shell commands to do things with Docker directly; however, Jenkins Pipeline keeps track of Docker images used in a Dockerfile via the "Docker Fingerprints" link (which is good, should that image need to change due to a security patch).
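For comparison, here is a rough sketch of what driving Docker by hand from `sh` steps might look like. The `docker run` flags are only an approximation of what `inside` arranges (mounting the workspace and using it as the working directory), not the exact command the plugin runs, and file ownership in the mounted workspace may need extra care.

```groovy
node {
    checkout scm

    // Build the image by hand instead of calling docker.build
    sh 'docker build -t cloudbees-node .'

    // Run a single command in a throwaway container with the workspace mounted.
    // Single quotes keep $PWD for the shell rather than Groovy interpolation.
    sh 'docker run --rm -v "$PWD":"$PWD" -w "$PWD" cloudbees-node npm install'
}
```

Every command needs its own `docker run` wrapper here, and as far as I know Jenkins gets no fingerprint record of the image when it is used this way.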

Links

- The project used as an example is here
- The pipeline is defined by the Jenkinsfile
- The environment is defined by the Dockerfile
- Read more on Docker Pipeline